In mathematics, percolation

theory describes the behaviour of connected clusters in a random graph.

Percolation problem is explained

as:

Assume that some liquid is poured

on top of some porous material. Will the liquid be able to make its way from

hole to hole and reach the bottom? This physical question is modelled

mathematically as a three-dimensional network of n × n × n points (or vertices/sites)

the connections (or edges/bonds) between each two neighbours may be open

(allowing the liquid through) with probability ‘p’, or closed with probability

‘1 – p’, and they are assumed to be independent. Therefore, for a given p, what

is the probability that an open path exists from the top to the bottom? The

behaviour for large n is of primary interest. This problem is known nowadays to

be bond-percolation.

Related problems:

- How far from each other should trees in an or-chard (forest) be planted in order to minimize the spread of blight (fire)?

- How infectious does a strain of flu have to be to create a pandemic? What is the expected size of an outbreak?

- Applicable in Network Robustness and Fragility.

Why percolation:

- Percolation provides a very simple model of random media that nevertheless retains enough realism to make its predictions relevant in applications.

- It is a test ground for studying more complicated critical phenomena and a great source of intuition.

2D bond-percolation:

- Stone: a large two dimensional grid of channels (edges). Edges in the grid are open or present with probability p (0 ≤ p ≤ 1) and closed or absent with probability 1 − p.

- Pores: open edges and p determines the porosity of the stone.

Bond-percolation:

- The space of the model is Zn or any infinite graph.

- The edges are open or closed with probability p, which may depend on the properties of the edge (e.g. degree).

- Open cluster is a connected component of the open edge graph.

- The network is said to percolate if there is an infinite open cluster containing the origin.

If the graph

is translation invariant there is no difference between the origin and any

other vertex.

Site-percolation:

- The space of the model is Zn or any infinite graph.

- The vertices are open or closed with probability p, which may depend on the properties of the vertex (e.g. degree).

- Open cluster is a connected component of the open vertex graph.

- The network is said to percolate if there is an infinite open cluster containing the origin.

Every bond percolation

problem can be realized as a site percolation problem (on a different graph).

The converse is not true.

A simple visual example to

illustrate the basic structure.

Basic Results:

Quantities of Interest:

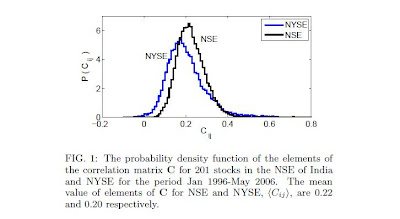

- |C| — the size of the open cluster at 0, where C stands for the open cluster itself;

- ¥(p) — percolation probability, defined as ¥(p) = PP(|C| = ∞);

- pc(d) — critical probability, defined as pc(d) = sup{p : ¥(p) = 0};

- X(p) — the mean size of the open cluster at the origin, defined as X(p) = Ep[|C|];

- Xf(p) — the mean size of the finite open cluster at the origin, defined as

Xf(p)

=

Ep[|C| : |C| < ∞];

and believed dependency of ¥(p) vs p is (continuous)

Percolation thus has three distinct phases

1) Sub-critical if p < pc

2) Critical if p = pc

3) Super-critical if p > pc

Critical phase:

Theorem: If d ≥ 2 then 0 < pc(d) < 1.

The exact value of pc(d)

is known only for a few special cases:

- pbondc (1) = psitec (1) = 1

- pbondc (2) = 1/2, psitec (2) ≈ .59

- pbondc (triangular lattice) = 2 sin(π/18)

- pbondc (hexagonal lattice) = 1 − 2 sin(π/18)

Theorem: Probability

that an infinite open cluster exists is 0 if p < pc(d) and 1 if p

> pc(d).

It is known that no infinite open cluster exists for p = pc(d)

if d = 2 or d ≥ 19.

Some bounds on the critical probability are known.

Theorem: If G is

an infinite connected graph and maximum vertex degree Ϫ < ∞. The critical

probabilities of G satisfy

In particular, pbondc

≤ psitec and

strict inequality holds for a broad family of graphs.

Subcritical Phase:

If p < pc all open clusters are finite with probability

1.

Theorem:

Probability of a cluster of size n at 0 decreases exponentially with n. More

precisely, there exists α(p) > 0, α(p) → ∞ as p → 0 and α(pc)

= 0 such that

This also implies that X(p) is finite for all p in the

subcritical region.

Theorem:

Probability distribution for cluster radii decays exponentially with the

radius, i.e.

where

ξ (p)

— the characteristic length of exponential decay — is the mean cluster radius.

Supercritical Phase:

If p > pc, with probability 1 at least one

infinite open cluster exists.

Theorem: The

infinite open cluster is unique with probability 1.

Theorem:

Probability of a finite open cluster of size n at 0 decreases exponentially

with n. More precisely, there exist functions β1(p) and β2(p),

satisfying 0 < β2(p) ≤ β1(p) < ∞, such that

Because X(p) is infinite for p > pc the truncated mean —

Xf(p) — over finite clusters only is considered.

The general shape of X(p) is believed to be as follows: